Mac mini M4 OpenClaw, Episode 1: Comparing Ollama and oMLX (Step-by-Step Tutorial)

Published: 2026-04-16 10:39:39 Author: 人工智能我来了


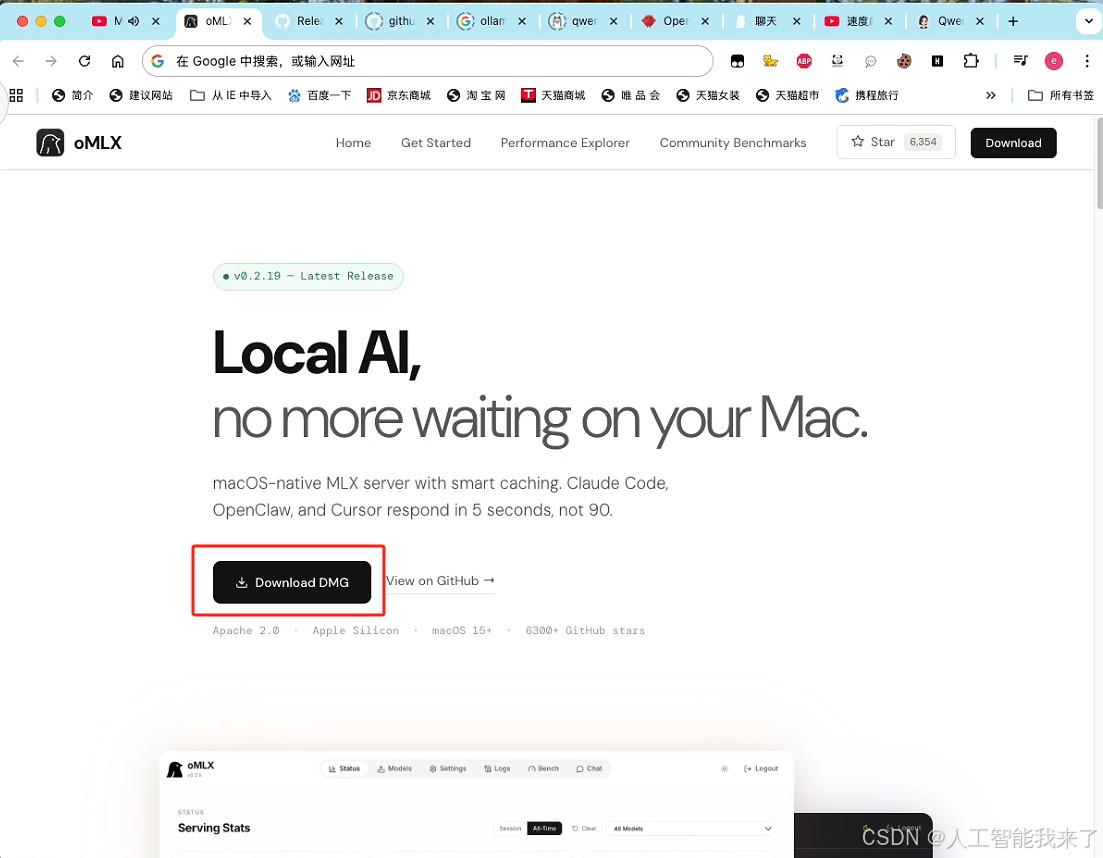

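This article introduces oMLX, a framework optimized for Apple macOS. It walks through installing a local runtime, the dependency packages, and an open-source client panel so that local models can run on a Mac, then configuring the model and starting the gateway to speed things up. In the author's test, response time dropped from about 1 minute 50 seconds to 10~15 seconds, a speedup close to 10x.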

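oMLX is a framework deeply optimized for Apple macOS that can make your local models run several times faster. This is a hand-holding, step-by-step walkthrough packed with practical detail.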

1. Why run local models on a Mac mini? Quiet operation and low power draw

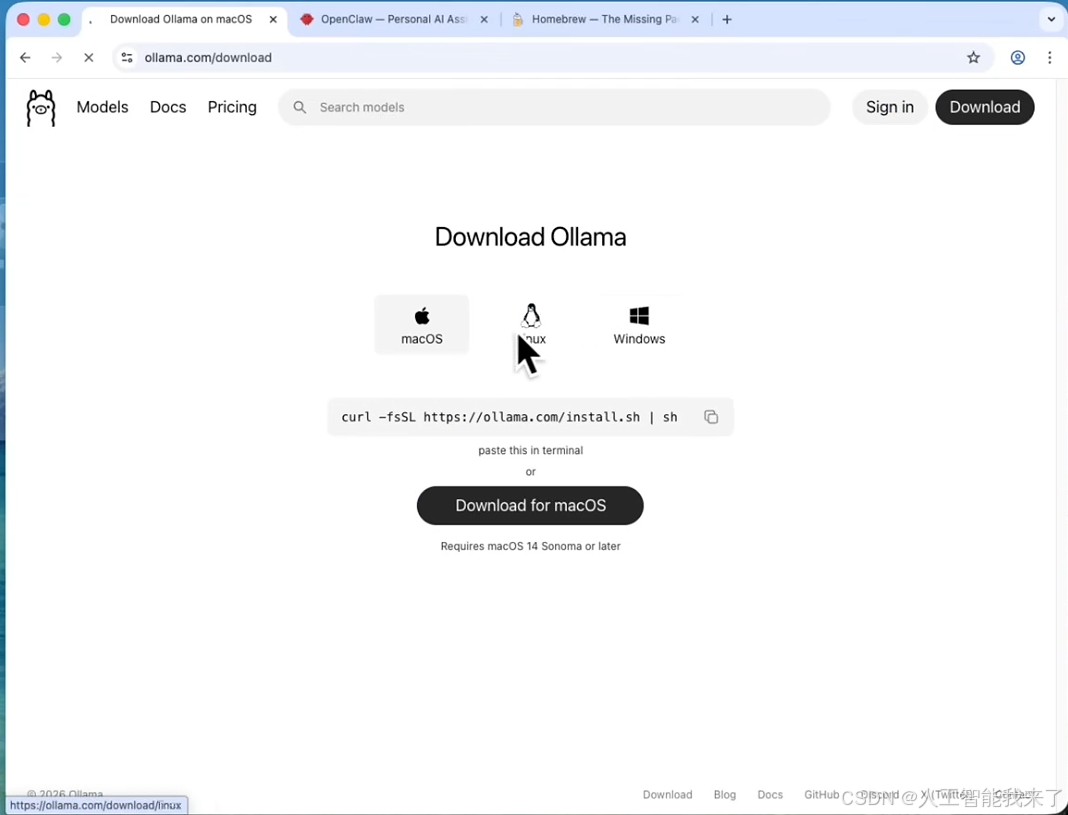

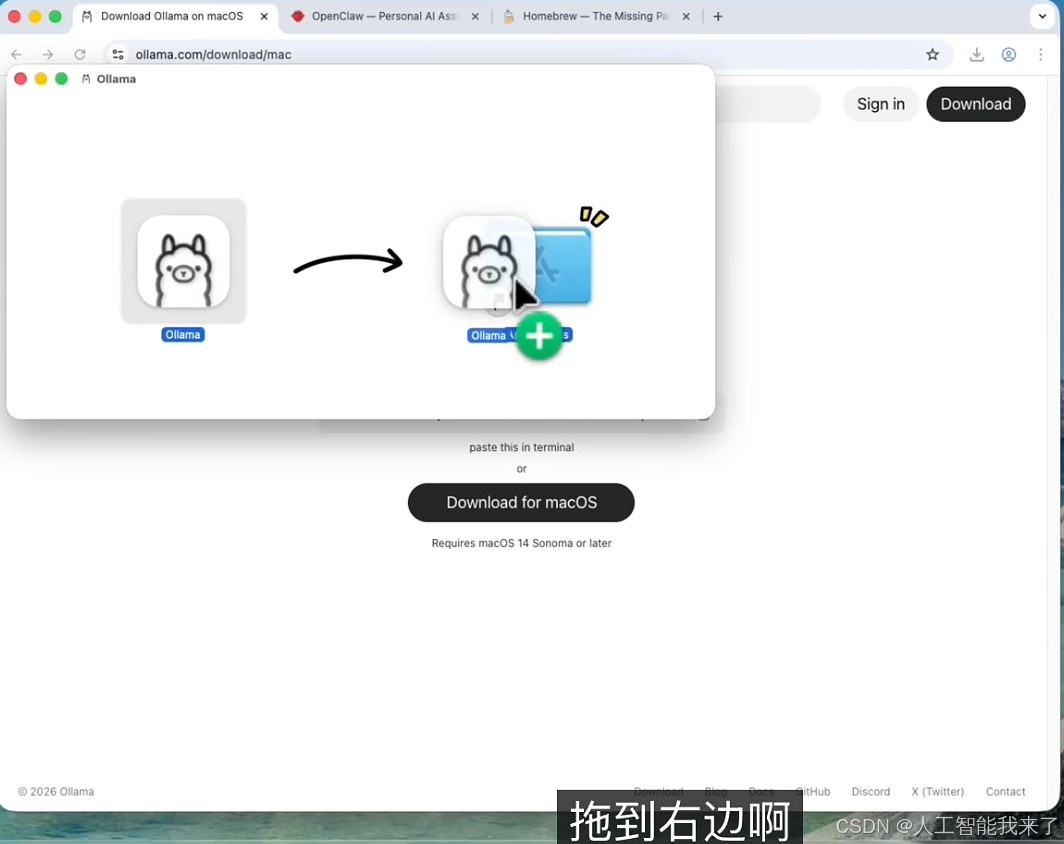

2. Download and install the Ollama local runtime

https://ollama.com/download

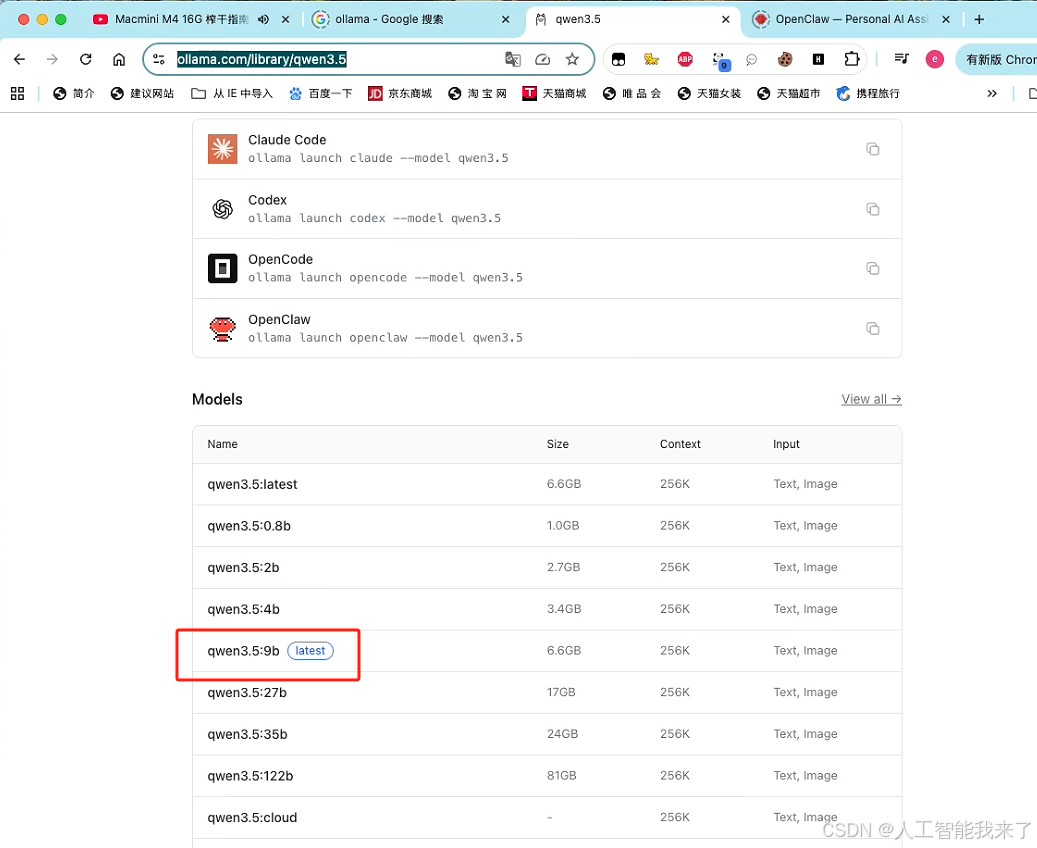

3. Which model fits in 16 GB of memory? Testing Qwen 3.5 (9B)

https://ollama.com/library/qwen3.5

➜ / cd

➜ ~ pwd

/Users/holyeyes

➜ ~ ollama list

NAME ID SIZE MODIFIED

➜ ~ ollama run qwen3.5:9b

pulling manifest

pulling dec52a44569a: 100% ▕██████████████████▏ 6.6 GB

pulling 7339fa418c9a: 100% ▕██████████████████▏ 11 KB

pulling 9371364b27a5: 100% ▕██████████████████▏ 65 B

pulling be595b49fe22: 100% ▕██████████████████▏ 475 B

verifying sha256 digest

writing manifest

success

>>> Sen
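When picking a model for a 16 GB machine, a back-of-the-envelope estimate of weight size can help. The sketch below uses a common rule of thumb that is an assumption added here, not something from the original walkthrough: weights take roughly parameters × bits per weight / 8 bytes, ignoring the KV cache and runtime overhead. The function name `weights_gb` is hypothetical.

```shell
# Rough weight-size estimate in GB.
# Assumption: size ≈ params (in billions) × bits per weight / 8.
# This ignores KV cache, activations, and whatever else the OS needs.
weights_gb() {
  awk -v p="$1" -v b="$2" 'BEGIN { printf "%.1f\n", p * b / 8 }'
}

weights_gb 9 4    # 9B parameters at 4-bit  -> 4.5
weights_gb 9 16   # 9B parameters at 16-bit -> 18.0
```

By this estimate a 4-bit 9B model leaves headroom on a 16 GB machine, while the same model at 16-bit would not fit. Actual downloads can be larger than the estimate, as the 6.6 GB pull above shows.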

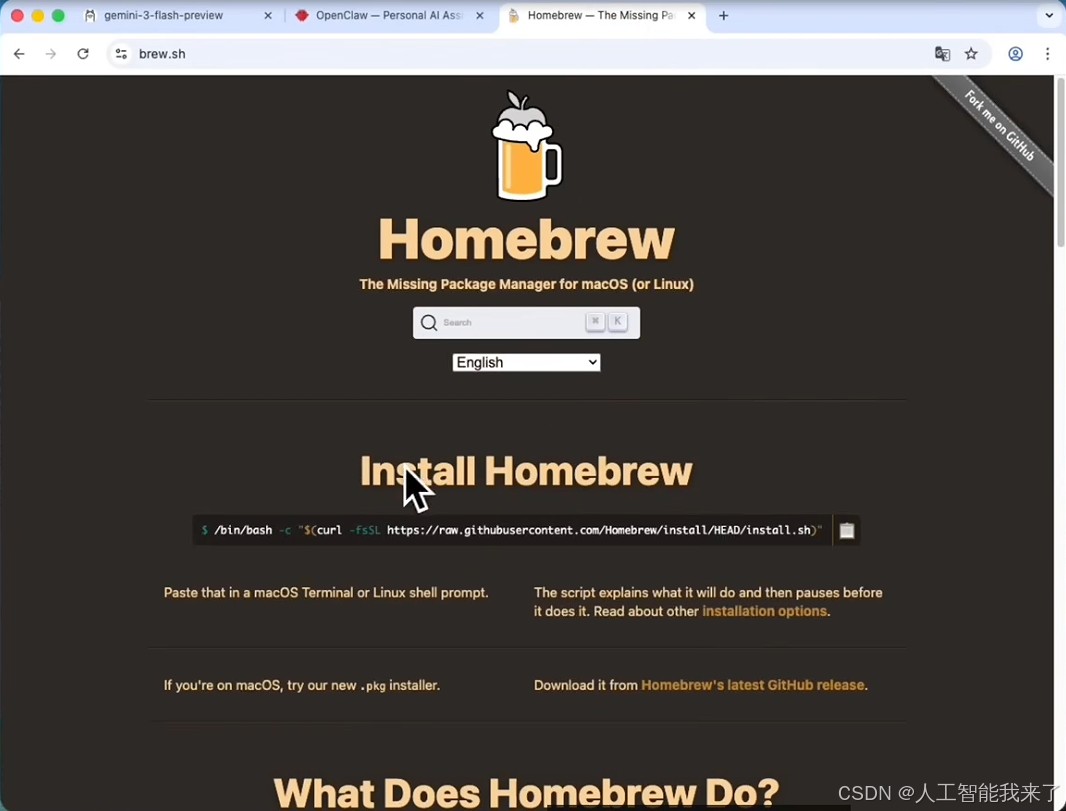

4. Install the macOS dependencies (Homebrew, Node.js, Git)
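Before running any installer, it can be useful to see which of these tools are already on the machine. The following is a minimal sketch; the helper names `have_tool` and `report_tools` are hypothetical, and the only thing they rely on is the standard `command -v` lookup.

```shell
# Return success if the named tool is on PATH.
have_tool() {
  command -v "$1" >/dev/null 2>&1
}

# Print one "found"/"missing" line per tool name given.
report_tools() {
  for tool in "$@"; do
    if have_tool "$tool"; then
      echo "$tool: found"
    else
      echo "$tool: missing"
    fi
  done
}
```

Calling `report_tools brew node git` lists the three dependencies this section covers; anything reported as missing is what the steps below install.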

➜ ~ cd

➜ ~ pwd

/Users/holyeyes

➜ ~ /bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

The output looks like this:

==> Checking for `sudo` access (which may request your password)...

Password:

==> This script will install:

/opt/homebrew/bin/brew

/opt/homebrew/share/doc/homebrew

/opt/homebrew/share/man/man1/brew.1

/opt/homebrew/share/zsh/site-functions/_brew

/opt/homebrew/etc/bash_completion.d/brew

/opt/homebrew

/etc/paths.d/homebrew

Press RETURN/ENTER to continue or any other key to abort:

==> /usr/bin/sudo /usr/sbin/chown -R holyeyes:admin /opt/homebrew

==> Downloading and installing Homebrew...

remote: Enumerating objects: 20571, done.

remote: Counting objects: 100% (6436/6436), done.

remote: Compressing objects: 100% (211/211), done.

remote: Total 20571 (delta 6326), reused 6228 (delta 6225), pack-reused 14135 (from 2)

==> /usr/bin/sudo /bin/mkdir -p /etc/paths.d

==> /usr/bin/sudo tee /etc/paths.d/homebrew

/opt/homebrew/bin

==> /usr/bin/sudo /usr/sbin/chown root:wheel /etc/paths.d/homebrew

==> /usr/bin/sudo /bin/chmod a+r /etc/paths.d/homebrew

==> Updating Homebrew...

==> Downloading https://ghcr.io/v2/homebrew/core/portable-ruby/blobs/sha256:cef6f881f516d2cdbd0a5bfc7e20318da8b047cf2674ee27c5d4858d3ecd6430

######################################################################### 100.0%

==> Pouring portable-ruby-4.0.1.arm64_big_sur.bottle.tar.gz

Updated 2 taps (homebrew/core and homebrew/cask).

==> Installation successful!

==> Homebrew has enabled anonymous aggregate formulae and cask analytics.

Read the analytics documentation (and how to opt-out) here:

https://docs.brew.sh/Analytics

No analytics data has been sent yet (nor will any be during this install run).

==> Homebrew is run entirely by unpaid volunteers. Please consider donating:

https://github.com/Homebrew/brew#donations

==> Next steps:

- Run brew help to get started

- Further documentation:

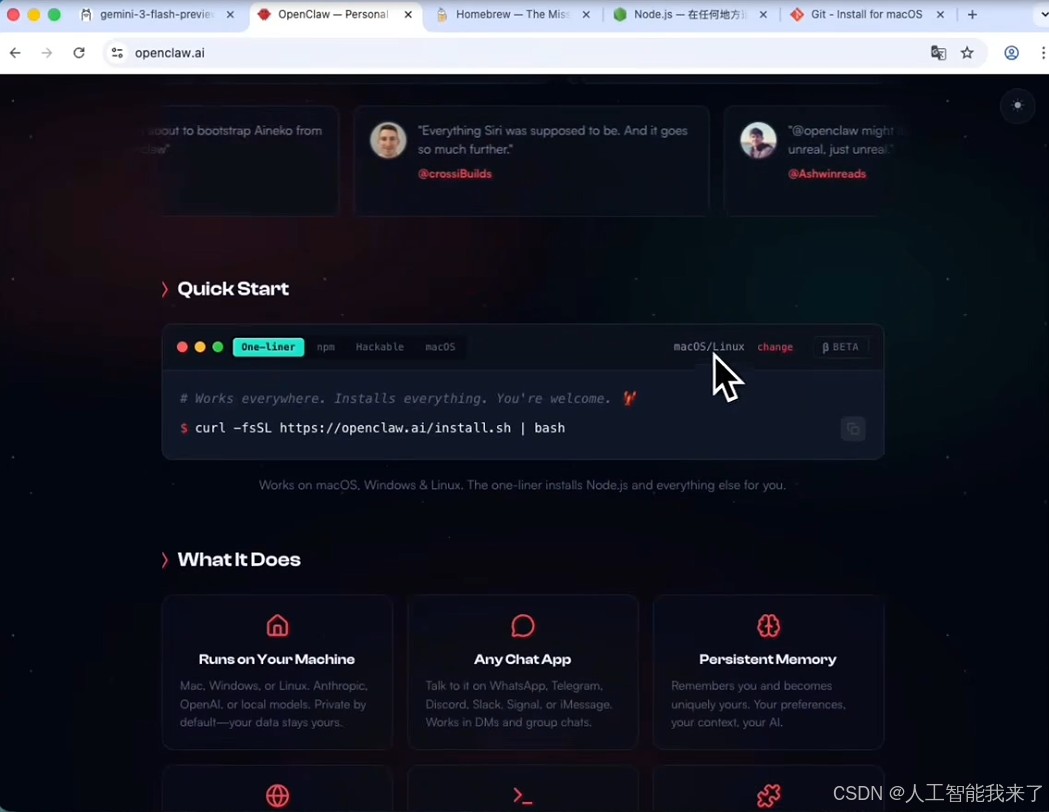

https://docs.brew.sh

5. Install the open-source client panel with one command

➜ ~ pwd

/Users/holyeyes

➜ ~ curl -fsSL https://openclaw.ai/install.sh | bash

🦞 OpenClaw Installer

The only crab in your contacts you actually want to hear from. 🦞

✓ Detected: macos

Install plan

OS: macos

Install method: npm

Requested version: latest

[1/3] Preparing environment

✓ Homebrew already installed

· Node.js not found, installing it now

· Installing Node.js via Homebrew

To relink, run:

brew unlink node@22 && brew link node@22

✓ Node.js installed

· Active Node.js: v22.22.1 (/opt/homebrew/opt/node@22/bin/node)

· Active npm: 10.9.4 (/opt/homebrew/opt/node@22/bin/npm)

[2/3] Installing OpenClaw

✓ Git already installed

· Installing OpenClaw v2026.3.13

✓ OpenClaw npm package installed

✓ OpenClaw installed

[3/3] Finalizing setup

🦞 OpenClaw installed successfully (OpenClaw 2026.3.13 (61d171a))!

cracks claws Alright, what are we building?

· Starting setup

🦞 OpenClaw 2026.3.13 (61d171a)

I'm the reason your shell history looks like a hacker-movie montage.

▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄

██░▄▄▄░██░▄▄░██░▄▄▄██░▀██░██░▄▄▀██░████░▄▄▀██░███░██

██░███░██░▀▀░██░▄▄▄██░█░█░██░█████░████░▀▀░██░█░█░██

██░▀▀▀░██░█████░▀▀▀██░██▄░██░▀▀▄██░▀▀░█░██░██▄▀▄▀▄██

▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀

🦞 OPENCLAW 🦞

┌ OpenClaw onboarding

│

◇ Security ──────────────────────────────────────────────────────────────╮

│ │

│ Security warning — please read. │

│ │

│ OpenClaw is a hobby project and still in beta. Expect sharp edges. │

│ By default, OpenClaw is a personal agent: one trusted operator │

│ boundary. │

│ This bot can read files and run actions if tools are enabled. │

│ A bad prompt can trick it into doing unsafe things. │

│ │

│ OpenClaw is not a hostile multi-tenant boundary by default. │

│ If multiple users can message one tool-enabled agent, they share that │

│ delegated tool authority. │

│ │

│ If you’re not comfortable with security hardening and access control, │

│ don’t run OpenClaw. │

│ Ask someone experienced to help before enabling tools or exposing it │

│ to the internet. │

│ │

│ Recommended baseline: │

│ - Pairing/allowlists + mention gating. │

│ - Multi-user/shared inbox: split trust boundaries (separate │

│ gateway/credentials, ideally separate OS users/hosts). │

│ - Sandbox + least-privilege tools. │

│ - Shared inboxes: isolate DM sessions (`session.dmScope: │

│ per-channel-peer`) and keep tool access minimal. │

│ - Keep secrets out of the agent’s reachable filesystem. │

│ - Use the strongest available model for any bot with tools or │

│ untrusted inboxes. │

│ │

│ Run regularly: │

│ openclaw security audit --deep │

│ openclaw security audit --fix │

│ │

│ Must read: https://docs.openclaw.ai/gateway/security │

│ │

├─────────────────────────────────────────────────────────────────────────╯

│

◇ I understand this is personal-by-default and shared/multi-user use requires

lock-down. Continue?

│ Yes

│

◇ Onboarding mode

│ QuickStart

│

◇ QuickStart ─────────────────────────╮

│ │

│ Gateway port: 18789 │

│ Gateway bind: Loopback (127.0.0.1) │

│ Gateway auth: Token (default) │

│ Tailscale exposure: Off │

│ Direct to chat channels. │

│ │

├──────────────────────────────────────╯

│

◇ Model/auth provider

│ Skip for now

6. Install the MLX (oMLX) platform, a speed booster for running models on a Mac

6.1 https://omlx.ai/

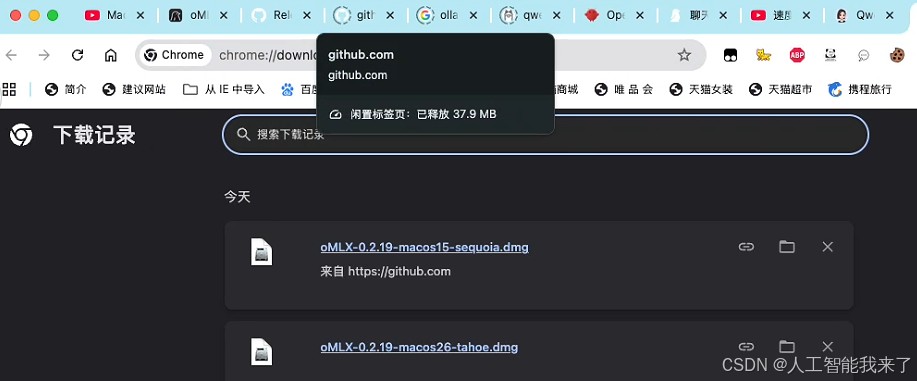

6.2 The first option is the one that worked for me

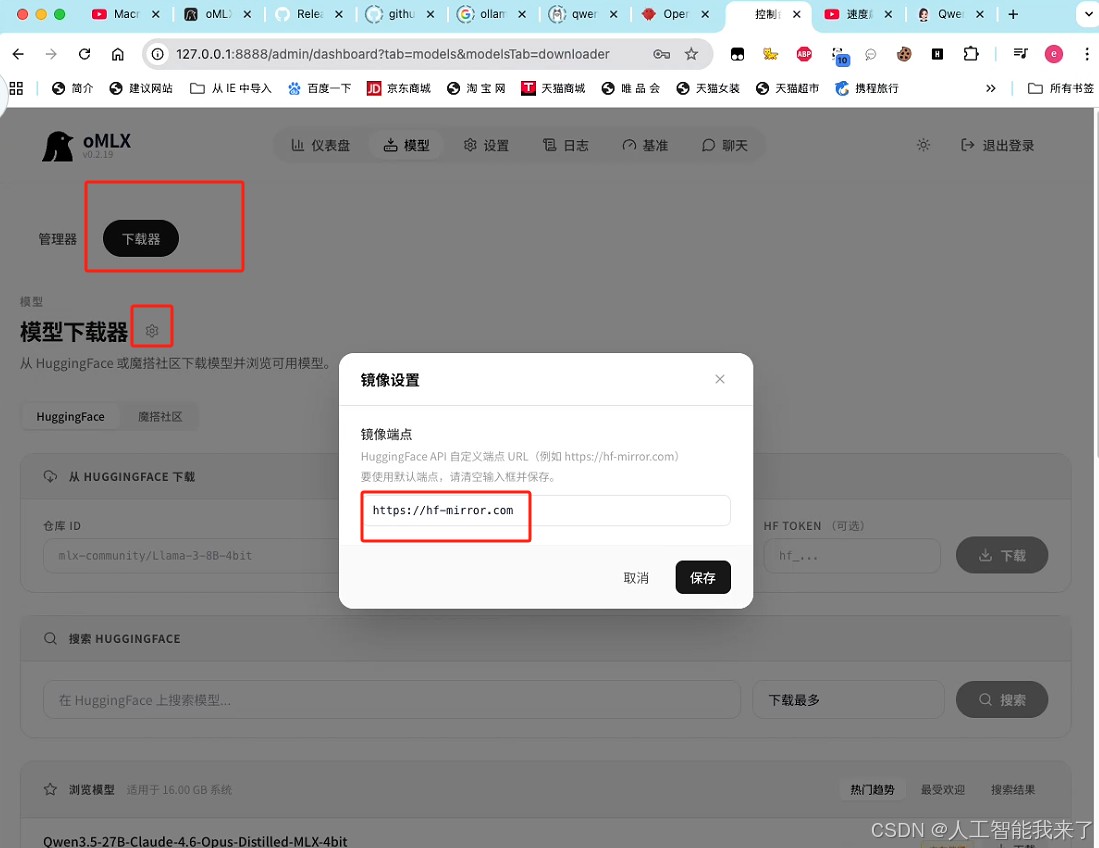

6.3 Before downloading a model, set the mirror site so the download runs fast

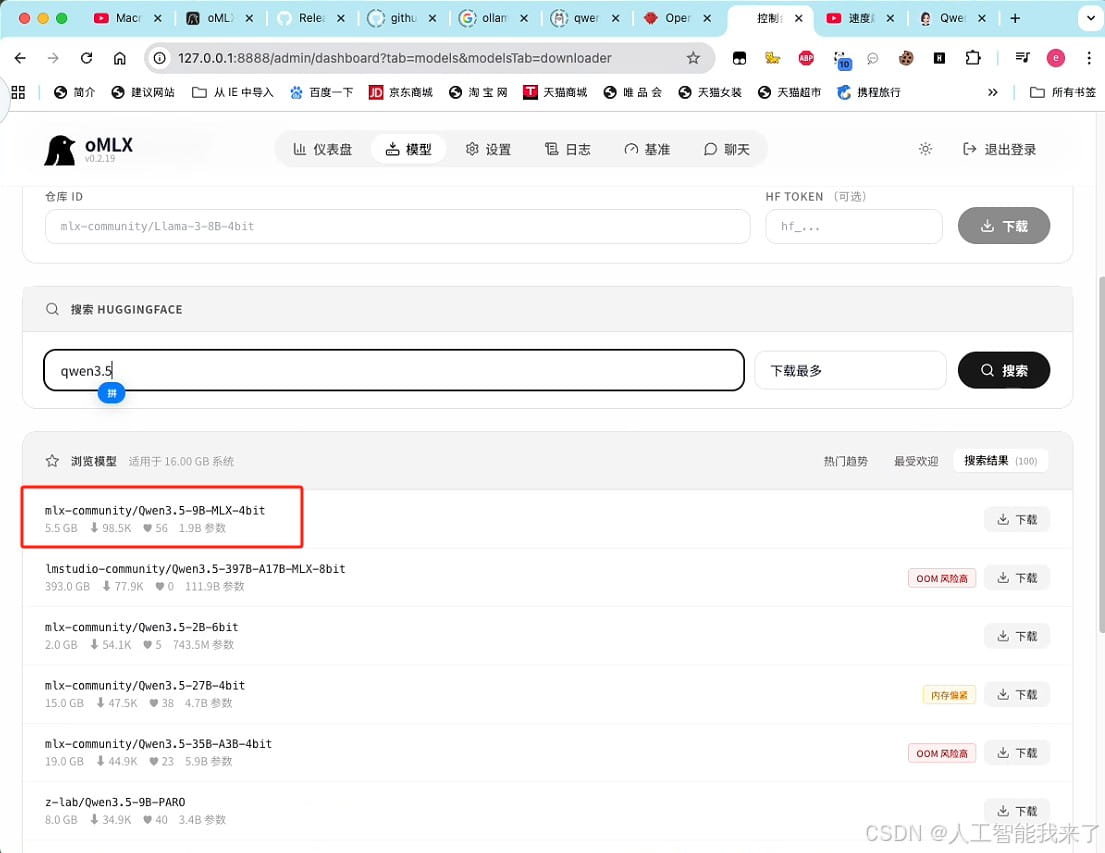

6.4 Download the model

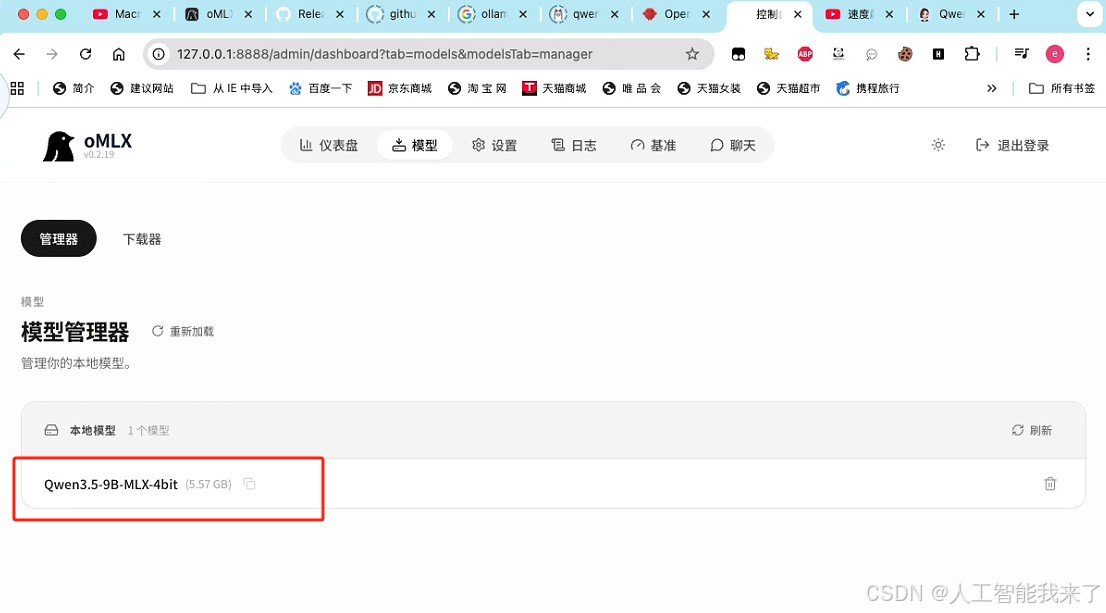

6.5 Once the download finishes, the model appears in the list

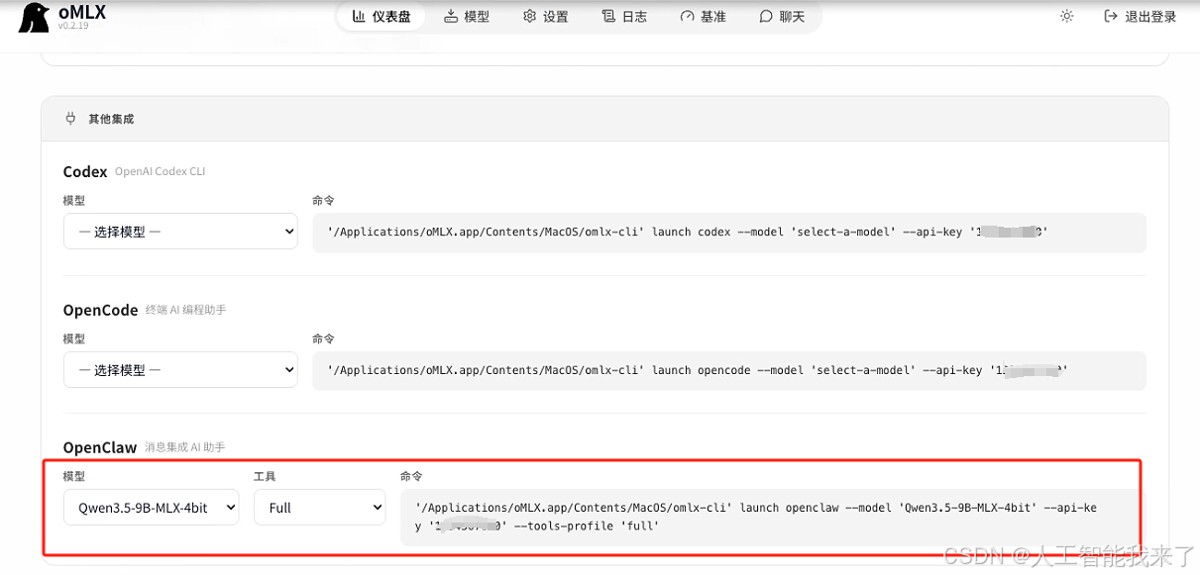

6.6 Configure the OpenClaw model parameters with the automated config script

Last login: Sun Mar 22 16:49:59 on ttys000

➜ ~ openclaw config

🦞 OpenClaw 2026.3.13 (61d171a) — Greetings, Professor Falken

▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄

██░▄▄▄░██░▄▄░██░▄▄▄██░▀██░██░▄▄▀██░████░▄▄▀██░███░██

██░███░██░▀▀░██░▄▄▄██░█░█░██░█████░████░▀▀░██░█░█░██

██░▀▀▀░██░█████░▀▀▀██░██▄░██░▀▀▄██░▀▀░█░██░██▄▀▄▀▄██

▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀

🦞 OPENCLAW 🦞

┌ OpenClaw configure

│

◇ Existing config detected ─────────╮

│ │

│ workspace: ~/.openclaw/workspace │

│ model: omlx/Qwen3.5-9B-MLX-4bit │

│ gateway.mode: local │

│ gateway.port: 18789 │

│ gateway.bind: loopback │

│ │

├────────────────────────────────────╯

│

◇ Where will the Gateway run?

│ Local (this machine)

│

◇ Select sections to configure

│ Model

│

◇ Model/auth provider

│ Custom Provider

│

◇ API Base URL

│ http://127.0.0.1:8888/v1

│

◇ How do you want to provide this API key?

│ Paste API key now

│

◇ API Key (leave blank if not required)

│

│

◇ Endpoint compatibility

│ OpenAI-compatible

│

◇ Model ID

│ Qwen3.5-9B-MLX-4bit

│

◇ Verification failed: status 401

│

◇ What would you like to change?

│ Change model

│

◇ Model ID

│ omlx/Qwen3.5-9B-MLX-4bit

│

◇ Verification failed: status 401

│

◇ What would you like to change?

│ Change base URL

│

◇ API Base URL

│ http://127.0.0.1:8888/v1

│

◇ How do you want to provide this API key?

│ Paste API key now

│

◇ API Key (leave blank if not required)

│ xxxxxxxx (the same key that was set earlier)

│

◇ Verification successful.

│

◇ Endpoint ID

│ custom-127-0-0-1-8888

│

◇ Model alias (optional)

│ omlx

Configured custom provider: custom-127-0-0-1-8888/omlx/Qwen3.5-9B-MLX-4bit

Config overwrite: /Users/holyeyes/.openclaw/openclaw.json (sha256 302bbefe5d4891362442457b61c553500dc6bdfc0ed4992aa5e2bf83aec39dff -> 4a26c79e37fc83418b80ef48c4b9263bdefa83af0e0fe34702e86db01f028a03, backup=/Users/holyeyes/.openclaw/openclaw.json.bak)

Updated ~/.openclaw/openclaw.json

│

◇ Select sections to configure

│ Continue

│

◇ Control UI ────────────────────────────────────╮

│ │

│ Web UI: http://127.0.0.1:18789/ │

│ Gateway WS: ws://127.0.0.1:18789 │

│ Gateway: reachable │

│ Docs: https://docs.openclaw.ai/web/control-ui │

│ │

├─────────────────────────────────────────────────╯

│

└ Configure complete.
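In the session above, verification returned status 401 while the API key was left blank and succeeded once the key was pasted in. The same check can be sketched outside the wizard. This is a hedged example, not OpenClaw's own verification logic: it assumes the server exposes the OpenAI-style `/models` route under the base URL, and the names `explain_status`, `probe_endpoint`, and `OMLX_API_KEY` are hypothetical.

```shell
# Map an HTTP status from the provider check to a likely cause.
explain_status() {
  case "$1" in
    200) echo "ok: endpoint reachable and key accepted" ;;
    401) echo "unauthorized: API key missing or wrong" ;;
    404) echo "not found: check the base URL path" ;;
    000) echo "no response: server not running or wrong port" ;;
    *)   echo "unexpected status $1" ;;
  esac
}

# Print only the HTTP status of GET <base_url>/models.
# $1 = base URL (e.g. http://127.0.0.1:8888/v1), $2 = API key.
# Assumption: the server implements the OpenAI-style /models route.
probe_endpoint() {
  curl -s -o /dev/null -w '%{http_code}' \
    -H "Authorization: Bearer $2" "$1/models"
}
```

A typical call would be `explain_status "$(probe_endpoint http://127.0.0.1:8888/v1 "$OMLX_API_KEY")"`. When nothing is listening on the port, curl reports `000`, which separates "server not running" from "wrong key".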

➜ ~ openclaw gateway restart

🦞 OpenClaw 2026.3.13 (61d171a)

Somewhere between 'hello world' and 'oh god what have I built.'

Restarted LaunchAgent: gui/501/ai.openclaw.gateway

7. Ollama vs. oMLX: measured comparison

The same question was put to both setups:

2, 6, 12, 20, 30, (?)
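To reproduce this kind of comparison, wall-clock time for any command can be measured with a small helper. This is only a sketch of one way to do it, not necessarily how the timings here were taken; `time_cmd` is a hypothetical name, and it has whole-second resolution because it relies on `date +%s`.

```shell
# Run a command, discard its output, and print elapsed whole seconds.
time_cmd() {
  t_start=$(date +%s)
  "$@" >/dev/null 2>&1
  t_end=$(date +%s)
  echo $((t_end - t_start))
}
```

For the Ollama side, something like `time_cmd ollama run qwen3.5:9b "2, 6, 12, 20, 30, (?)"` would time a single answer. For the oMLX side, the same helper can wrap a `curl` request to the OpenAI-compatible endpoint configured earlier; the exact request path and payload are assumptions to confirm against the server's documentation.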

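Results:

| Setup | Time taken |
| --- | --- |
| Ollama (native) | 1 min 50 s |
| oMLX (accelerated) | 10~15 s |

That is 110 s against 10~15 s, a speedup of roughly 7x to 11x, or close to 10x.

Responses arrive in seconds rather than minutes.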
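The speedup figure follows directly from the two timings. As a quick worked check, with the hypothetical helper name `speedup` and taking 1 minute 50 seconds as 110 seconds:

```shell
# Speedup factor = baseline seconds / accelerated seconds.
speedup() {
  awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", a / b }'
}

speedup 110 15   # slow end of the oMLX range -> 7.3
speedup 110 10   # fast end of the oMLX range -> 11.0
```

Both ends of the 10~15 second range bracket the "close to 10x" figure.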
